Leading AI product design for defense and intelligence, years before the generative AI boom.

DARPA contract renewal driven by a design concept I pitched

30% faster AI agent creation through workflow automation

16% faster analyst processing through redesigned tooling

7 web apps shipped across defense and commercial clients

Development

In March 2020, "AI in product design" meant something different than it does today. Generative models weren't the interface. The phrase "prompt engineering" didn't exist in public vocabulary. "AI" meant machine learning pipelines, classification, pattern recognition, and the hard work of designing tools for the analysts doing the actual thinking.

That's what I was hired to lead.

Blue Ridge Dynamics builds mission-critical digital tools for defense, intelligence, and commercial clients. Over seventeen months as UX Manager, I led design across seven platforms, shipped three AI products, built multiple design systems for high-stakes operational environments, and managed a team of two designers who both got promoted to mid-level under my leadership.

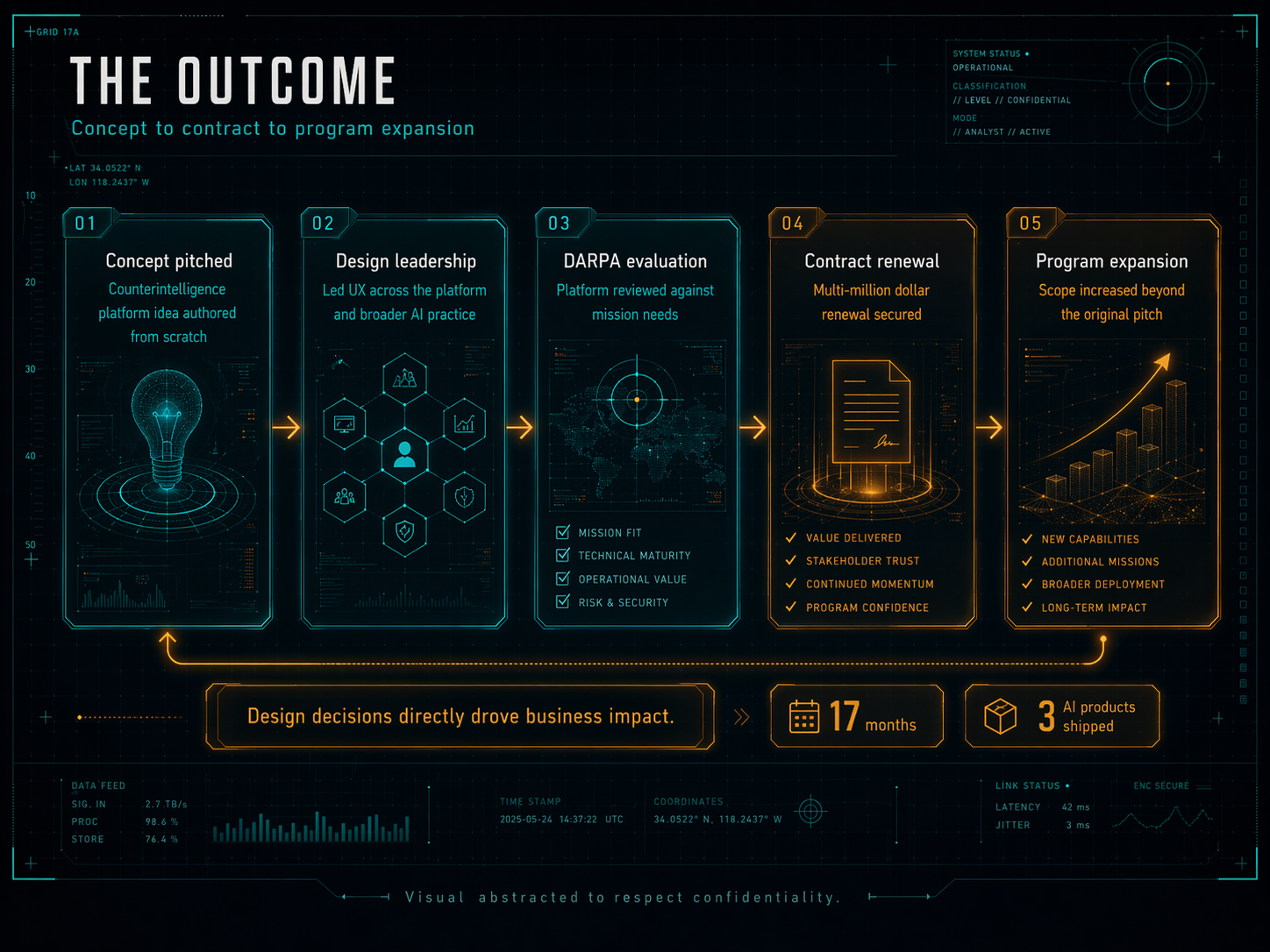

One of those projects drove a multimillion-dollar DARPA contract renewal. It started as a concept I pitched. This is the case study for that work, and for the broader AI design practice that grew around it.

Role: UX Manager, Blue Ridge Dynamics

Duration: Mar 2020 to Aug 2021

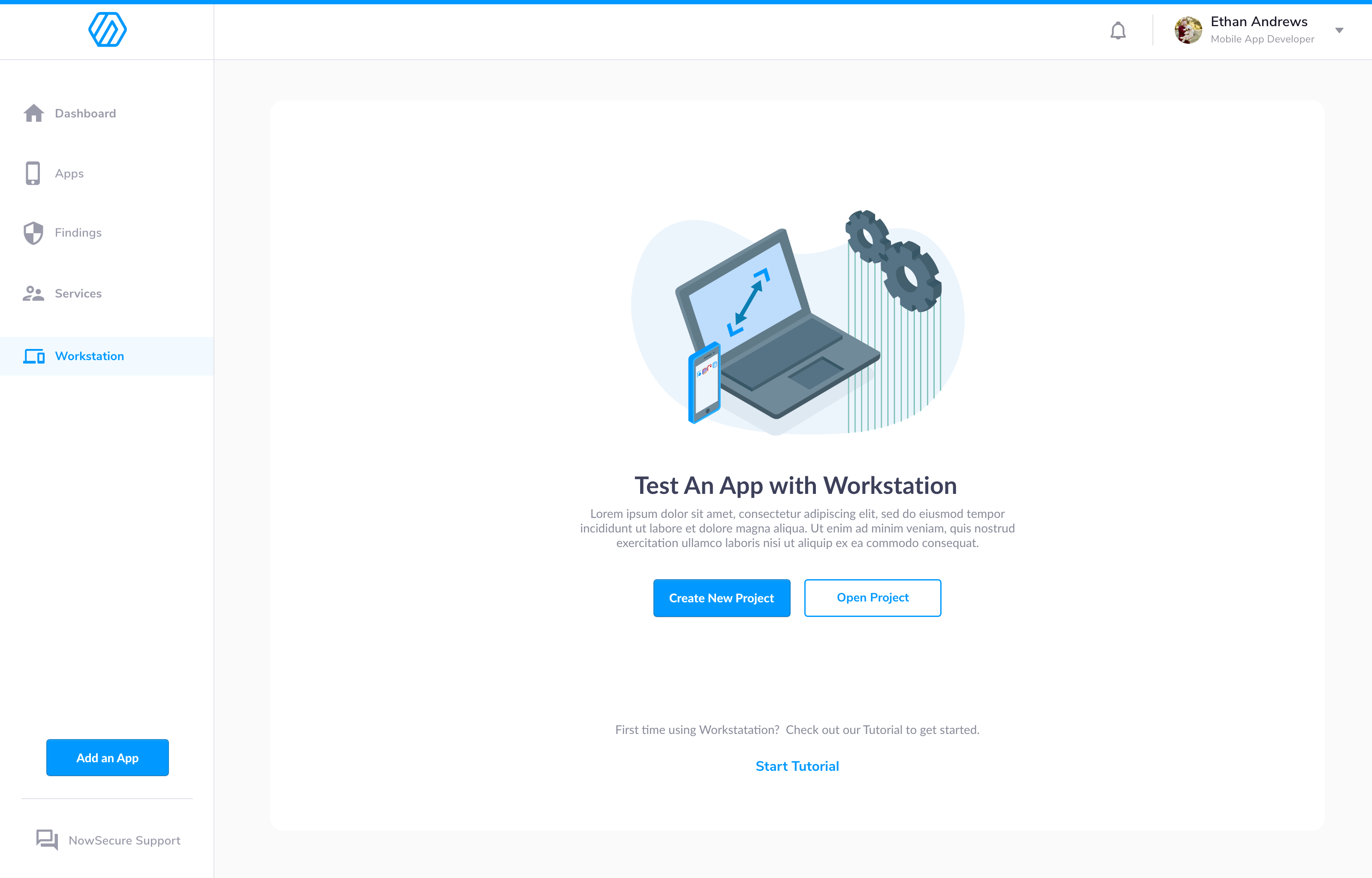

Clients: DARPA, Thomson Reuters, NowSecure, federal defense partners

DARPA came to Blue Ridge with a problem. Threat actors operating in social media environments, specifically black-hat operators targeting government assets, were hard to surface through conventional intelligence methods. They moved fast. They were wary of anything that felt surveilled. And the analyst teams trying to track them were drowning in manual workflows.

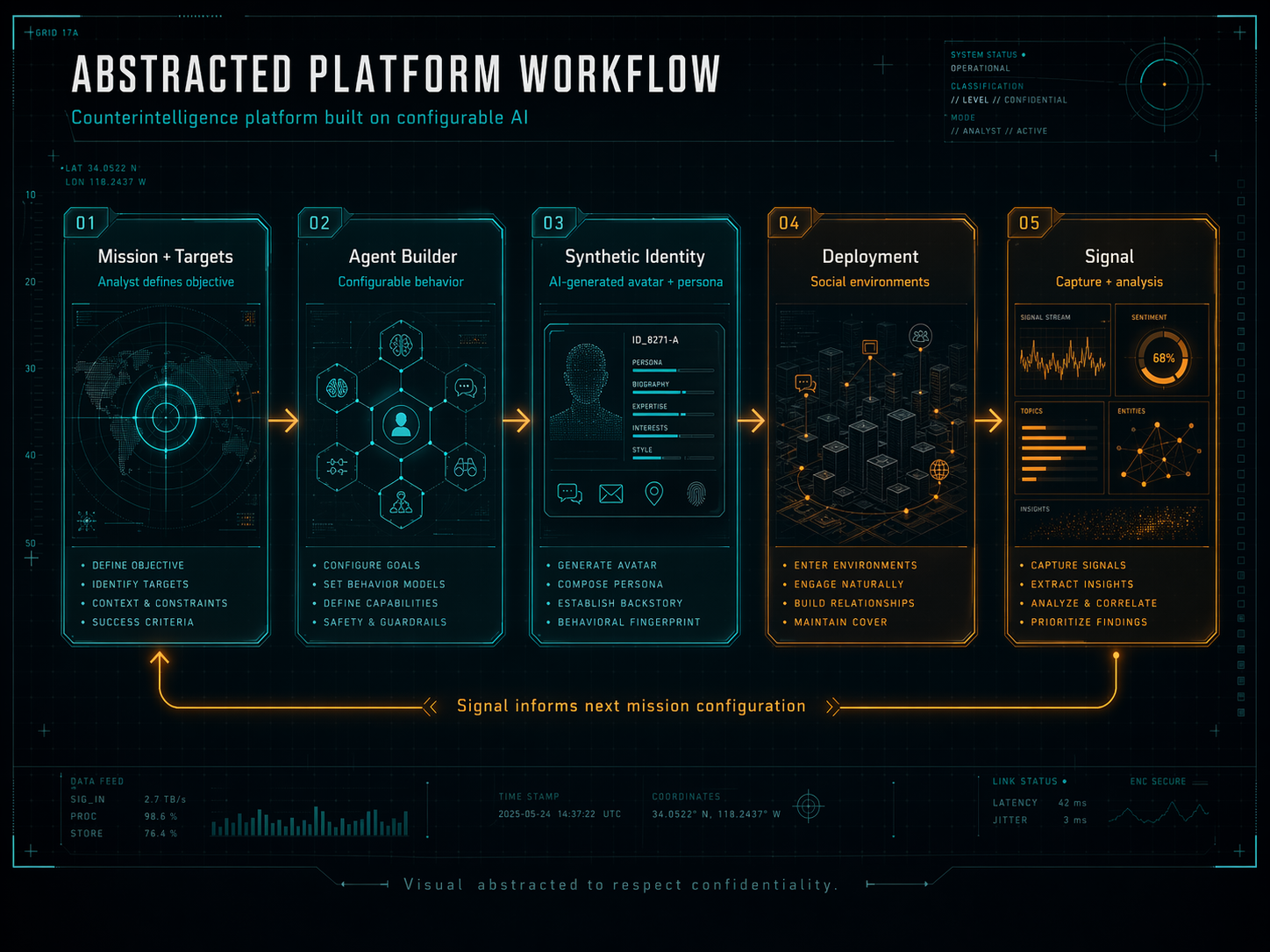

The concept I pitched was a CRM-style platform where analysts could design, configure, and deploy AI agents into those social environments, acting as believable identities that could interact, observe, and capture signal. Think of it as a configurable honeypot system for counterintelligence operations, with AI doing the persona work at a scale humans couldn't match.

The detail that makes this story genuinely unusual: we were using proprietary AI image generation tools in 2020, years before Midjourney, DALL-E, or Stable Diffusion were public. We were generating synthetic avatar faces and photographs for operational use cases when generative image AI was still a research-lab phrase. The platform let analysts configure a full synthetic identity: a face, a backstory, a behavior pattern, a deployment target. Then it let them manage hundreds of them from a single console.

The design challenge didn't sit where most product design challenges sit. It sat somewhere stranger.

The two headline efficiency gains on this project came from different places.

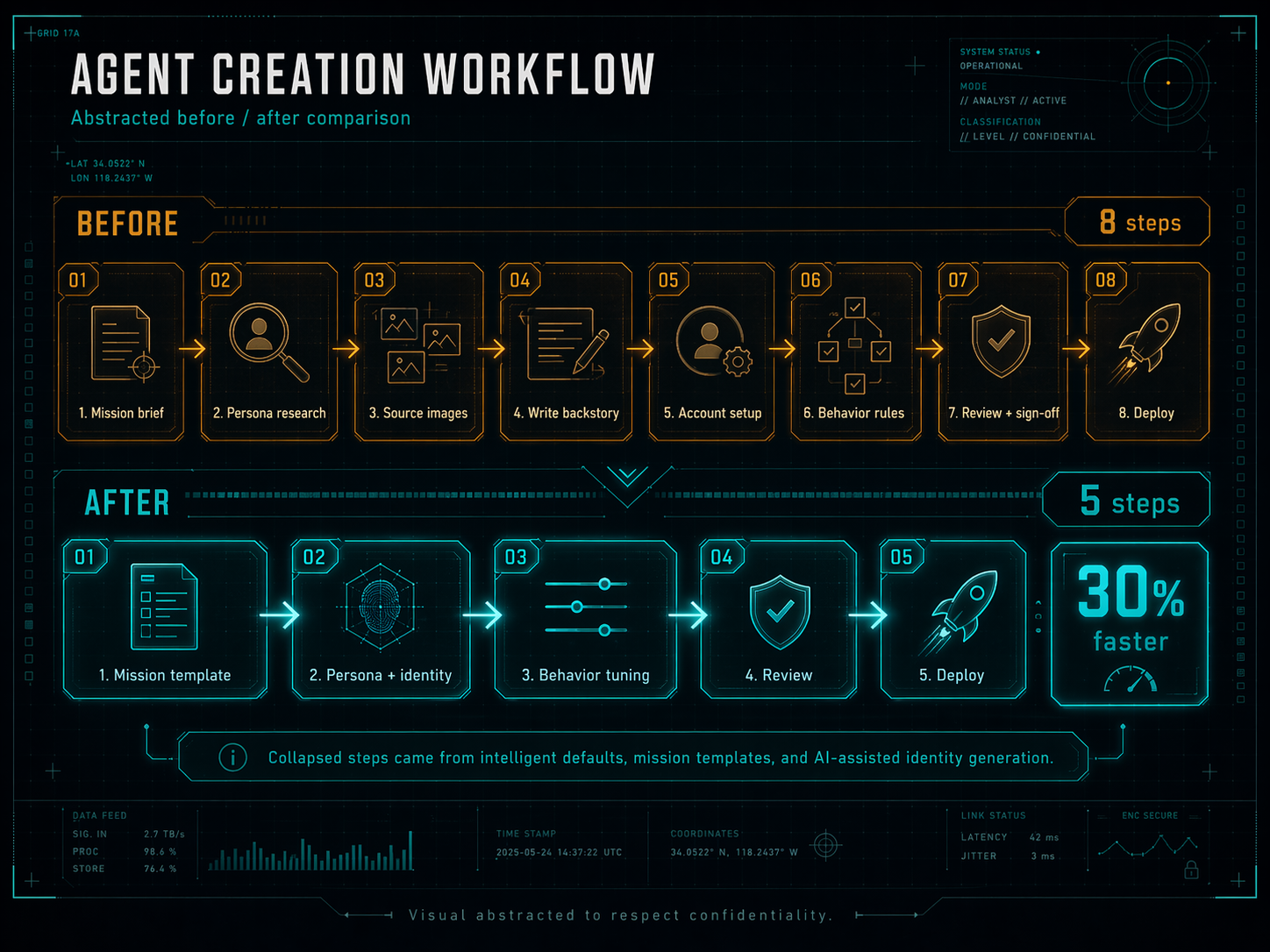

The 30% reduction in agent creation time came from rebuilding the workflow around intelligent defaults, templates for common mission profiles, and AI-assisted generation for the parts of the persona that didn't require analyst judgment (like face generation, name variants, and plausible biographical details). The analyst still drove every decision that mattered, but the busywork collapsed.

The 16% reduction in analyst processing time came from a rebuilt triage and signal review surface. Incoming intelligence used to arrive undifferentiated. I redesigned the analyst's primary view around the questions they were actually asking: what's new, what's urgent, what's connected, what can I ignore. Prioritization became the interface, not an afterthought.

DARPA evaluated the platform. They renewed the contract at a multimillion-dollar level. They expanded the scope of program investment to cover additional capabilities we hadn't originally pitched. The concept I'd pitched from scratch became a program they wanted to keep building.

That's the outcome I'm proudest of from this period, because it connects design decisions directly to business impact in a domain where that connection is usually invisible. A design concept I authored drove contract revenue. That's the shortest version of the story, and the one that travels best.

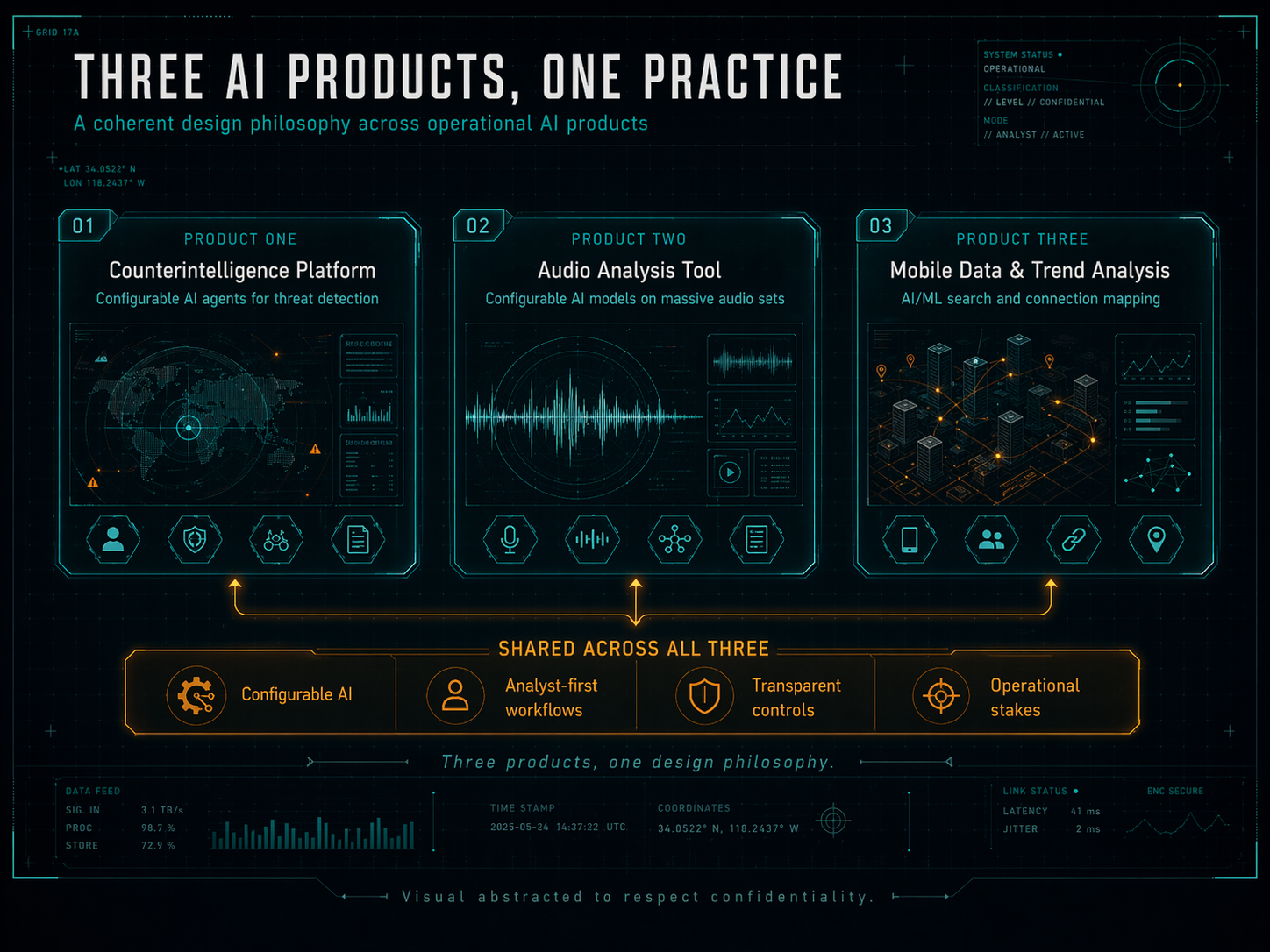

The DARPA platform was the headline. It wasn't the whole story. Two other AI products shipped during my time at Blue Ridge, and together they formed what I now recognize as a coherent design practice for AI products in high-stakes environments.

Product One: Counterintelligence Platform. Configurable AI agents for threat detection.

Product Two: Audio Analysis Tool. Configurable AI models on massive audio sets.

Product Three: Mobile Data & Trend Analysis. AI/ML search and connection mapping.

Shared across all three: configurable AI, analyst-first workflows, operational stakes.

Three products, one practice. The common pattern across my AI work at Blue Ridge.

The audio analysis tool used configurable AI models to process, analyze, and manipulate extremely large audio datasets, collapsing what used to be hours of manual listening into minutes of machine-assisted review. It shipped as a commercial product sold to clients.

The mobile data and trend analysis platform simplified data operations across mobile devices, with AI/ML-driven search and connection mapping that let analysts trace relationships between entities that would have been invisible through conventional search. Also commercial.

Designing three AI products in seventeen months, in the same organization, for the same kind of user, taught me something I didn't have the language for at the time: there is a coherent design practice for AI products in operational domains. Configurable intelligence, analyst-first workflows, transparent controls, and trust interfaces aren't features. They're a philosophy. Every AI product I've designed since has been better for having learned this one first.

The AI work was the story. It wasn't the full scope. Across seventeen months at Blue Ridge, I led or oversaw design on seven platforms in total.

This was also the third time in my career I'd built a design system from zero. That repetition is part of how I got fast at it. Every system I've built since has been shaped by what these ones taught me about governance, handoff, and the cost of getting it wrong under deadline pressure.

I managed two designers at Blue Ridge. Both came in at junior level. Both got promoted to mid-level during my tenure.

I mention this for two reasons. First, because design leadership is judged as much by the people you grow as by the products you ship, and this is one of the clearest examples of that in my career. Second, because mentoring in a distributed, remote, high-security environment is a different skill than mentoring in a collocated design studio. Feedback loops are slower. Critique is harder to schedule. Trust takes longer to build. I learned to be deliberate about all of it, and I've carried those practices forward into every team I've led since.

AI is an interface problem first. In 2020, when I was starting this work, most AI conversations in product were about what the models could do. The real question was always what the user needed to see to trust them. That hasn't changed. The models have gotten orders of magnitude more capable. The interface problem is the same one.

Configurable AI is more valuable than autonomous AI for operational users. Every user I designed for at Blue Ridge wanted to be in control. They didn't want the AI to decide. They wanted the AI to do work they'd configured and report back. That insight predates most of the current AI UX debates, and I still think it's the correct answer for any domain where the stakes are operational.

Generative AI in 2020 wasn't science fiction. It was a product constraint. Using proprietary image generation tools for operational use cases taught me that generative models are just tools. Powerful, fast, useful tools. Design treats them the same way it treats any other capability: figure out where they help the user, figure out where they don't, and build the surface that makes the difference obvious.

Every AI product I've designed since has been better for having learned this practice first.

I led design and product direction as UX Manager at Blue Ridge Dynamics. The two designers on my team contributed across the work and grew through it. Engineering, data science, and program management partnered on every project. Clients included DARPA, Thomson Reuters, NowSecure, and additional federal defense partners.