Founding an AI design practice inside an enterprise security company, and leading the design of the AI-powered remediation product that changed how developers fix vulnerabilities.

70%+ of detected flaws covered by AI-generated fixes

10 languages supported at launch

Minutes, not days: time from vulnerability to fix

1st AI product shipped across the Veracode platform

Development

Application security has a compliance problem and a human problem. The compliance problem is obvious: every enterprise has to scan code for vulnerabilities. The human problem is harder. When a scanner flags a flaw, a developer has to stop what they're building, context-switch into security expertise they may not have, research the fix, and write it.

Across Veracode's enterprise customer base (Fortune 500 financial services, healthcare, government, retail) this pattern repeated thousands of times a day. Developers were drowning in security debt. AppSec teams were drowning in escalations. Every flaw that sat in a backlog was an open door.

Generative AI was the obvious lever. The question was how to design with it responsibly, in a domain where a hallucinated fix isn't just wrong. It's a security incident.

Duration: 2023 to Present

Product: Veracode Fix (AI code remediation)

Customers: Enterprise developers and AppSec teams at McKesson, Garmin, HDI Global SE, Cardinal Health, Cox Automotive, Boston Scientific, MIT, and others

I founded Veracode's AI Innovation Group, starting as a small R&D unit exploring how generative AI could fit into enterprise security workflows. Over time, the group grew from a handful of researchers and designers into a full cross-functional product team, and is now expanding Fix's AI remediation capabilities into other Veracode products.

As design lead from zero to one, I owned the design of Veracode Fix end to end, and the design direction across the broader developer tooling ecosystem that grew around it (IDE plugins, CLI workflows, and the GitHub Action for CI/CD).

What that meant in practice:

Most AI product design starts from a forgiving domain: writing assistance, image generation, summarization. Wrong outputs are annoying, not dangerous.

Security code remediation is the opposite. A wrong fix can introduce a new vulnerability, break production, or quietly paper over the original flaw. "Pretty good on average" is a failure mode.

The design challenge sat at the intersection of three tensions:

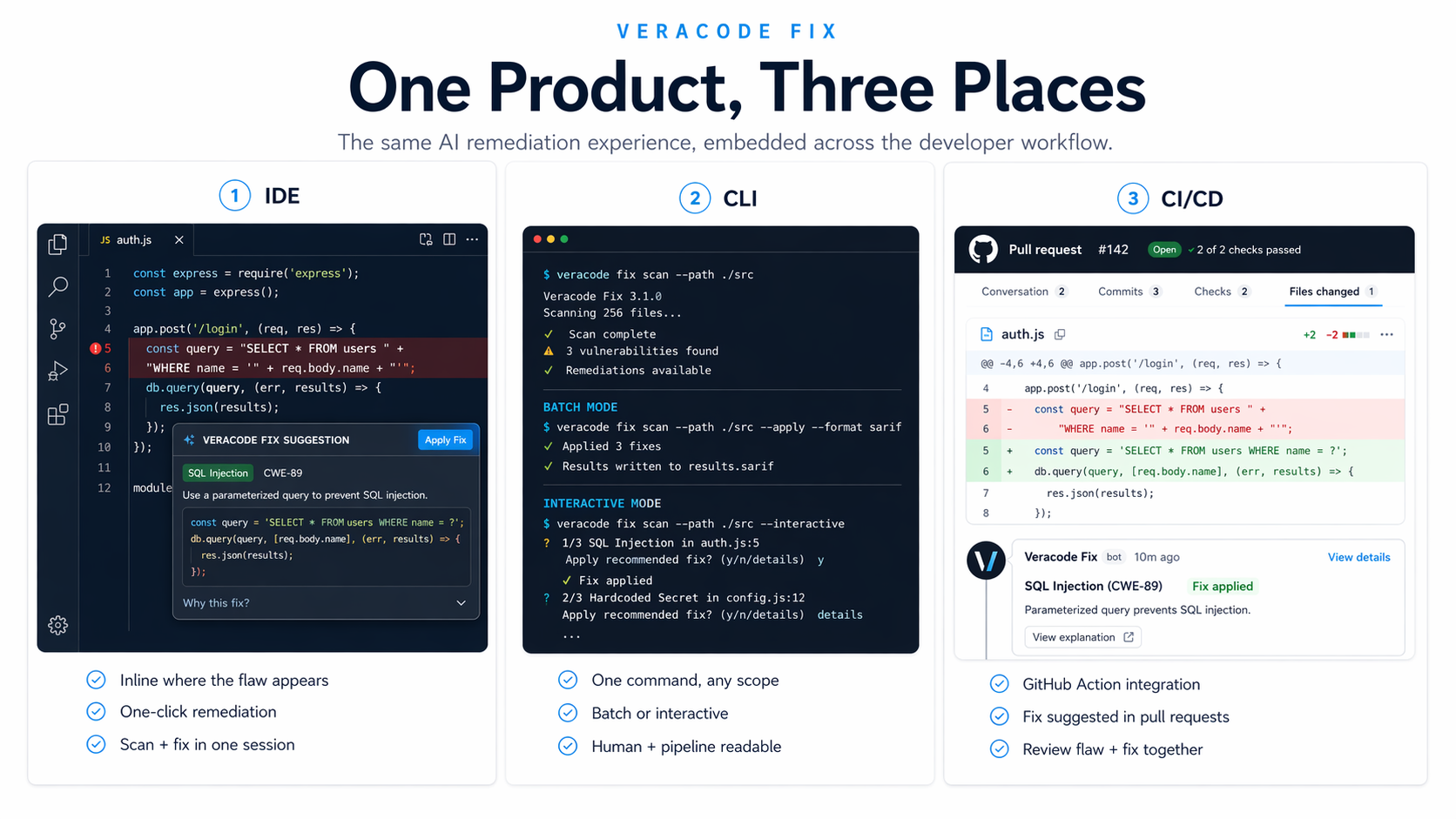

Bet 1: Meet developers where they already work. Fix lives inside the IDE (with plugins for the major editors), the CLI (for terminal-first developers and automation), and CI/CD (as a GitHub Action that runs on pull and merge). I designed the three surfaces as a single experience with shared mental models. A developer who learned Fix in VS Code could move to the CLI without re-learning how suggestions, batch mode, and flaw triage worked. Fix integrates with Veracode Pipeline Scan, so it lands exactly where developers are already receiving scan feedback, not as a separate destination they have to navigate to.

Bet 2: Show up to five options, not one "answer." A single AI-generated fix presented as "the answer" is exactly the kind of UX that creates blind trust in AI. I pushed for an interaction model where Fix surfaces up to five suggested patches per flaw, letting developers see alternatives and choose the one that best fits their context. That small shift reframes the developer's mental model from "do I trust this AI?" to "which of these options is right for my code?", which is a much healthier relationship with the tool.

Bet 3: Interactive and batch modes, not one or the other. Single-fix interactive mode for high-stakes changes where a developer wants to review each suggestion carefully. Batch mode for repetitive, low-risk flaws where auto-applying top suggestions across an entire project saves hours of cleanup work. Same underlying intelligence, two very different interaction patterns, designed to map to how developers actually triage security work across a codebase.
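To make the two modes concrete, here is a minimal sketch of how a flaw list might be split between batch and interactive handling. It is an illustration of the interaction pattern only, not Veracode's implementation: the `Flaw` type, the severity labels, the `triage` function, and the rule that only low-severity flaws qualify for batch mode are all assumptions made for this example.

```python
from dataclasses import dataclass, field

# Cap taken from the "up to five options" model described in Bet 2.
MAX_SUGGESTIONS = 5


@dataclass
class Flaw:
    """Hypothetical flaw record; field names are illustrative only."""
    flaw_id: str
    severity: str  # assumed labels: "low", "medium", "high"
    suggestions: list = field(default_factory=list)  # ranked, best first


def triage(flaws, batch_severities=frozenset({"low"})):
    """Split flaws into a batch plan, an interactive queue, and unfixed IDs.

    Batch mode takes the top-ranked suggestion for flaws whose severity is
    in `batch_severities`; every other flaw goes to interactive review with
    up to MAX_SUGGESTIONS options. Flaws without suggestions are reported
    separately instead of being silently dropped.
    """
    batch_plan = {}
    review_queue = []
    unfixed = []
    for flaw in flaws:
        options = flaw.suggestions[:MAX_SUGGESTIONS]
        if not options:
            unfixed.append(flaw.flaw_id)
        elif flaw.severity in batch_severities:
            batch_plan[flaw.flaw_id] = options[0]
        else:
            review_queue.append((flaw.flaw_id, options))
    return batch_plan, review_queue, unfixed
```

The point of the sketch is that both modes read from the same ranked suggestions. Only the routing differs, which is what lets a developer move between them without learning a second model.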

The hardest design work wasn't on any single surface. It was getting three surfaces to feel like one product without flattening what made each environment useful.

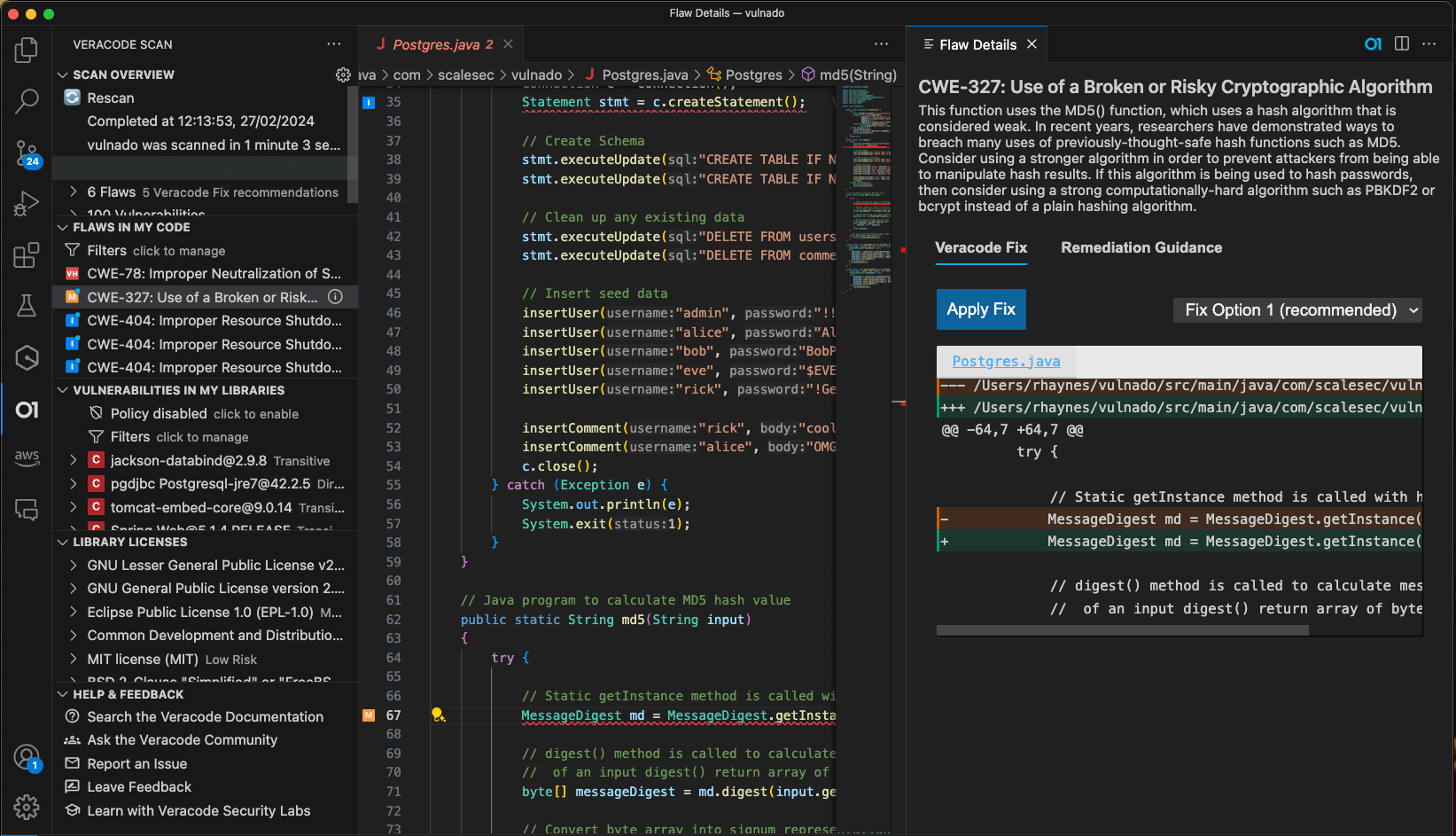

IDE. Fix shows up where the flaw does: inline in the code, with the suggestion one click away. The developer never leaves their editor. For teams that also use Veracode Static Analysis, Fix combines scanning and remediation in the same session.

CLI. A single command scans a file, a directory, or an entire project. Batch mode auto-applies top suggestions at scale. Interactive mode walks through suggestions one at a time. Output is designed to be both human-readable and pipeline-parseable.

CI/CD. The GitHub Action integrates directly into pull and merge workflows. When a PR introduces a flaw, Fix suggests the remediation as a comment on the relevant lines. Reviewers see flaw and fix in the same place they already review code.

Three design decisions I led that shaped the final shape of Fix:

Surfacing multiple suggestions instead of one. The instinct in AI products is to show the single "best" answer. That's wrong for security. Developers are the experts on their own code, not the AI, and they need to see alternatives to make a real judgment call. The five-suggestion model keeps the developer in the driver's seat and respects the fact that good code is contextual.

Designing for the developer's existing mental model. Every decision about where Fix lives, what it looks like, and how it behaves was made against one question: does this feel like a natural extension of the environment the developer is already in? If a developer had to learn a new concept to use Fix, I treated that as a design failure. The product succeeds because it feels familiar, not novel.

Making trust legible. Enterprise AppSec teams don't approve AI tools unless the tool is transparent about what it is and isn't doing. I pushed the design toward clarity at every step: clear labeling of AI-generated content, clear source attribution (fixes are grounded in Veracode's own remediation data, not hallucinated), clear accept/reject controls, and clear outcomes when a fix isn't available ("No fixes found" rather than a made-up fallback). Transparency as a UX pattern, not a disclaimer.
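As a rough illustration of that pattern, the sketch below renders a result so that the AI label, the source attribution, and the empty state are always explicit. The `Suggestion` type, the `render_result` function, and the output wording (other than the "No fixes found" message quoted above) are hypothetical and do not reflect the product's actual output format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Suggestion:
    """Hypothetical suggestion record used only for this example."""
    patch: str   # short description of the proposed change
    source: str  # where the fix pattern comes from (attribution label)


def render_result(flaw_id, suggestions):
    """Return a text summary that never hides an empty result.

    When there are no suggestions, the function says so explicitly
    rather than falling back to a made-up fix.
    """
    if not suggestions:
        return f"{flaw_id}: No fixes found"
    lines = [f"{flaw_id}: {len(suggestions)} AI-generated suggestion(s)"]
    for index, suggestion in enumerate(suggestions, start=1):
        lines.append(
            f"  [{index}] {suggestion.patch} (source: {suggestion.source})"
        )
    lines.append("  Accept or reject each suggestion before it is applied.")
    return "\n".join(lines)
```

Treating the empty case as a first-class outcome, rather than an error path, is the design choice this is meant to show.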

"One future success factor will be Veracode's artificial intelligence helping fix our findings. AI supporting fixes is a game changer. We have an approved plan for benefitting from AI, and it's time to roll it out."

- HDI Global SE (Veracode enterprise customer)

Designing AI in a zero-tolerance domain reshaped how I think about AI product work generally. Three takeaways I carry forward:

Trust is a UI problem before it's a model problem. A perfect model delivered through an opaque interface will lose to a good model delivered through a transparent one. Every AI product I design now starts with the question "what does the user need to see to believe this?"

The best AI products hide the AI. Fix works because it feels like a better IDE, a sharper CLI, a smarter PR review. The AI is doing the work, but the experience is familiar. Novelty in AI UX is almost always a design smell.

Enterprise AI adoption is bought by committee. The AppSec lead, the CISO, the developer, and the compliance officer all have to say yes. I learned to design surfaces that answered each of their questions in the same product, without cluttering the experience for any of them.

Design and AI product direction led by me, as Founder of the Veracode AI Innovation Group. Product, engineering, data science, and security research partnership from across Veracode. Special thanks to the enterprise customers and AppSec partners whose feedback shaped the product direction.